Entertainment

Kelly Brook Returns to Loose Women in Stunning Floral Dress After Holidays

Kelly Brook, 46, made a glamorous return to Loose Women on Monday, wearing a red and blue floral dress. She shared her joy on Instagram after her holidays with husband Jeremy Parisi.

Politics

Gwent Police Faces Legal Action Over Transgender Toilet Policy

Gwent Police has been threatened with legal action over its policy of allowing transgender officers to use women's toilets, which contradicts government guidance on single-sex spaces based on biological sex.

Sports

Anthony Joshua's Comeback Fight Venue Changed to Jeddah Superdome

Anthony Joshua's fight against Kristian Prenga on July 25 has been moved from Riyadh to the Jeddah Superdome, setting up a potential clash with Tyson Fury.

Crime

Paddleboarder and Dog Rescued from Cliff During Lightning Storm

A woman and her dog were rescued from a cliff base during a severe lightning storm after her paddleboard deflated, leaving them stranded in treacherous conditions.

Health

Environment

Thousands Flock to U-M Historic Peony Garden

Tens of thousands visit the University of Michigan's W.E. Upjohn Peony Garden annually to see one of North America's largest collections of historic herbaceous peonies.

Louisiana Balloon Release Ban: Fines Up to $2,500

Louisiana has passed a law banning intentional outdoor balloon releases, effective August 1. Violators face fines of up to $2,500 and community service. Environmentalists support the ban, while some critics argue it hinders expressions of grief.

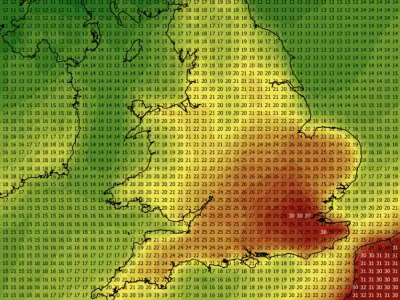

England and Wales Warmest Spring on Record

England and Wales recorded their warmest spring since records began in 1884, driven by an exceptional late-May heatwave. Nine of the ten warmest springs in England have occurred since 2007.

Spring 2025 Hottest on Record in England and Wales

Spring 2025 was the warmest in England and Wales since records began in 1884, with a mean temperature of 10.41°C. Nine of the ten warmest springs have occurred since 2007.

Shorebirds Thrive on Lindisfarne: Terns and Plovers

Conservation efforts on Lindisfarne's Holy Island have led to a resurgence in tern and plover populations, showcasing successful shorebird protection strategies.

Weather

Tech

UK Geography Quiz: Test Your Knowledge

Challenge yourself with 100 multiple-choice questions about the geography of the United Kingdom. Perfect for students, travelers, and geography enthusiasts.