Boston Dynamics Unveils AI-Powered Spot Robot with Advanced Reasoning Capabilities

Leading robotics manufacturer Boston Dynamics has announced a significant artificial intelligence upgrade for its iconic four-legged Spot robot. The company has integrated Google DeepMind's latest Gemini Robotics-ER 1.6 AI model, transforming the robot's capabilities from simple programmed responses to sophisticated autonomous reasoning.

From Manual Control to Autonomous Reasoning

The integration represents a fundamental shift in how robots interact with their environment. Boston Dynamics engineers describe the AI's control of Spot as "identical" to a human operator manually driving the robot. The crucial difference is that the robot can now interpret and execute tasks on its own.

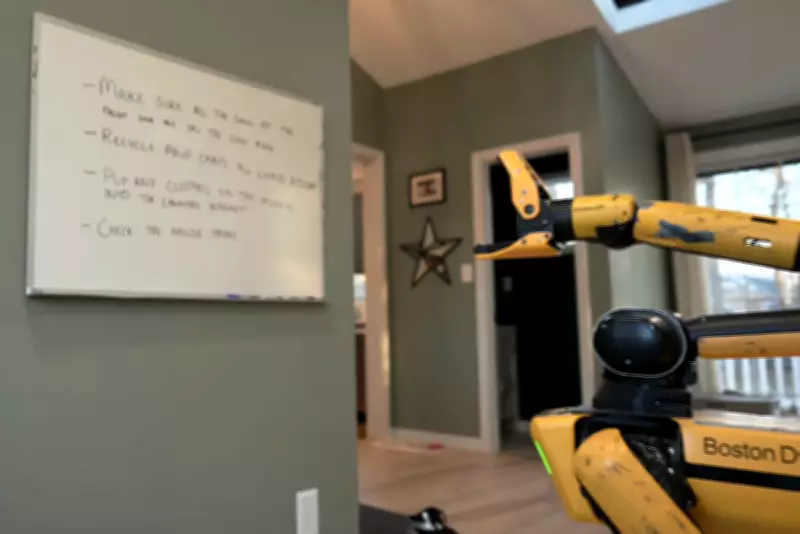

Demonstrations showcase the robot's new abilities:

- Reading task instructions from a whiteboard before executing them

- Performing household chores including tidying up spaces

- Recycling cans and checking mouse traps for rodents

- Walking a real dog on a leash and throwing balls for play

Natural Language Interaction Revolution

The most significant advancement is in how humans can now interact with the robot. Where Spot previously required complex programming and code input, the upgraded robot responds to natural language commands. This changes the engineer's role from writing intricate code to setting goals and objectives through conversational instructions.

"Robots like Spot are already extremely capable of navigating complex and changeable environments, collecting data and sensor readings, and manipulating objects," explained Spot engineer Issac Ross. "This demo points to a future where users can rely more on natural language to guide Spot's actions, rather than complex code."

Embodied Reasoning: Bridging Digital and Physical Worlds

The Gemini AI model provides what developers call "embodied reasoning" - the ability for robots to understand physical spaces and make decisions based on real-world context. This represents a crucial advancement in robotics, allowing machines to move beyond simple instruction-following to genuine problem-solving in dynamic environments.

"Capabilities like instrument reading and more reliable task reasoning will enable Spot to see, understand, and react to real-world challenges completely autonomously," said Marco da Silva, general manager of Spot at Boston Dynamics.

Google DeepMind researchers Laura Graesser and Peng Xu emphasized the importance of this development in their blog post: "For robots to be truly helpful in our daily lives and industries, they must do more than follow instructions, they must reason about the physical world. By enhancing spatial reasoning and multi-view understanding, we are bringing a new level of autonomy to the next generation of physical agents."

Commercial Applications and Future Potential

Boston Dynamics is one of only a few "trusted testers" for Google's Gemini AI models, and no timeline for commercial availability has been disclosed. Boston Dynamics has been asked for additional information about market release plans.

Previous versions of Spot have already found applications in diverse settings including car manufacturing plants and rocket launchpad sites. The new reasoning capabilities, combined with natural language interaction, open possibilities for domestic environments where robots could assist with everyday tasks and household management.

The integration represents a significant step toward creating robots that can operate independently in complex, changing environments while responding to human needs through intuitive communication methods.