AI Transforms Military Strategy with Rapid Decision-Making in Iran Strikes

Academics and defence experts are raising alarms as artificial intelligence collapses the time required for military decision-making, heralding a new era of bombing operations that outpace human thought. The recent barrage of US and Israeli strikes on Iranian targets, which reportedly utilised AI tools like Anthropic's Claude model, underscores a shift towards automated war planning that could marginalise human oversight.

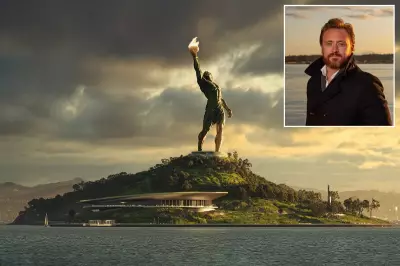

Speed and Scale of AI-Powered Strikes

In the initial 12 hours of the conflict, nearly 900 strikes were launched against Iranian targets, including a missile attack that killed Iran's supreme leader, Ayatollah Ali Khamenei. This rapid escalation was facilitated by AI systems that shorten the "kill chain", the process that runs from target identification through legal approval to strike execution. Craig Jones, a senior lecturer in political geography at Newcastle University, noted, "The AI machine is making recommendations for what to target, which is actually much quicker in some ways than the speed of thought." He emphasised that such scale and speed make it possible to carry out assassination-style strikes while simultaneously decapitating the regime's ability to respond, tasks that historically took days or weeks.

Technological Integration and Ethical Concerns

The US Department of War and other national security agencies have deployed Claude, Anthropic's model, in partnership with Palantir to enhance intelligence analysis and decision-making. These AI systems rapidly analyse vast data sets, including drone footage, telecommunications interceptions, and human intelligence, using machine learning to identify targets, prioritise them, recommend weaponry, and evaluate legal grounds. However, David Leslie, a professor of ethics, technology, and society at Queen Mary University of London, warned of "cognitive off-loading," where humans may feel detached from the consequences of strikes as machines handle the cognitive effort.

Humanitarian Implications and Global Context

A missile strike in southern Iran that hit a school near a military barracks killed 165 people, many of them children, and has drawn condemnation from the UN as a "grave violation of humanitarian law." The US military is investigating these reports. It remains unclear what AI capabilities Iran possesses: it claimed in 2025 to use AI in missile-targeting systems, though its efforts are hampered by international sanctions. In contrast, the US and China lead as AI superpowers in military applications.

Policy Shifts and Future Deployment

Prior to the Iran strikes, the US administration announced plans to phase out Anthropic's AI from its systems because of the company's restrictions on the use of its models for autonomous weapons and surveillance of US citizens, but the technology remains in use for now. Meanwhile, OpenAI has secured a deal with the Pentagon for military use of its models. Prerana Joshi, a research fellow at the Royal United Services Institute, highlighted that AI deployment is expanding across defence estates globally, improving productivity and efficiency in logistics, training, and decision management by synthesising data at unprecedented speeds.

As AI continues to reshape military operations, experts stress the need for robust human oversight to prevent automated systems from undermining ethical and legal standards in warfare.