The Overlooked Threat: How AI Could Hollow Out Higher Education

Public discourse surrounding artificial intelligence in universities has fixated on a single concern: academic dishonesty. Will students employ chatbots to compose essays? Can educators detect AI-generated work? Should institutions prohibit or embrace this technology? While these questions merit attention, they obscure a more profound transformation already reshaping higher education, one that reaches far beyond classroom misconduct.

The Pervasive Integration of AI Across University Operations

Educational institutions are implementing artificial intelligence across numerous facets of institutional functioning. Some applications operate invisibly, including systems that allocate resources, identify students at risk of dropping out, optimize course timetables, and automate routine administrative determinations. More visible implementations include students using AI tools for summarization and study assistance, instructors employing them to construct assignments and syllabi, and researchers relying on them for coding and literature reviews, compressing hours of tedious labor into minutes.

While individuals might misuse AI to circumvent academic integrity or avoid work responsibilities, the extensive adoption of artificial intelligence in higher education prompts a more fundamental inquiry: As machines grow increasingly proficient at performing research and learning tasks, what becomes of higher education's essential purpose? What role does the university serve in this evolving landscape?

Three Categories of AI Systems and Their Educational Impacts

Examining three distinct classifications of artificial intelligence systems reveals their respective influences on university environments:

Nonautonomous AI Systems: These technologies, already deployed in admissions evaluation, procurement, academic guidance, and institutional risk analysis, automate specific tasks while maintaining human oversight. The person remains "in the loop," using these systems as instruments. Such implementations pose risks to student privacy and data security, can introduce algorithmic bias, and frequently offer insufficient transparency about where problems originate. Universities typically possess compliance offices, institutional review boards, and governance mechanisms designed to address these concerns, though their effectiveness varies.

Hybrid AI Systems: This category encompasses tools like AI-assisted tutoring chatbots, personalized feedback mechanisms, and automated writing support, often relying on generative AI technologies and large language models. While human users establish overall objectives, the intermediate steps the systems take frequently remain unspecified. Hybrid systems increasingly shape daily academic activities, with students employing them as writing companions, tutors, brainstorming partners, and on-demand explainers, while faculty use them to generate rubrics, draft lectures, and design syllabi.

This is where the "cheating" conversation properly belongs, as both students and educators increasingly depend on technological assistance. However, hybrid systems also raise more intricate ethical questions regarding transparency, accountability, intellectual credit, and cognitive offloading. Natural-language interfaces make it difficult to distinguish human from automated interactions, potentially creating alienation and distraction. Furthermore, when instructors use AI to draft assignments and students employ AI to formulate responses, questions arise about who is conducting the evaluation and what exactly is being assessed.

Autonomous AI Agents: The most consequential transformations may emerge from systems resembling agents rather than assistants. While truly autonomous technologies remain aspirational, the prospect of "researcher in a box" systems capable of independently conducting studies grows increasingly plausible. Such agentic tools promise to liberate time for work emphasizing human capacities like empathy and problem-solving. In teaching, this could mean faculty maintain headline instructional roles while delegating more day-to-day teaching labor to efficiency-optimized systems.

The Erosion of Learning Ecosystems and Mentorship Structures

Universities fundamentally operate as practice systems rather than information factories, relying on pipelines of graduate students and early-career academics who learn to teach and research through participation in that same work. If autonomous agents absorb more "routine" responsibilities that historically served as entry points into academic life, institutions might continue producing courses and publications while gradually diminishing the opportunity structures that sustain expertise development over time.

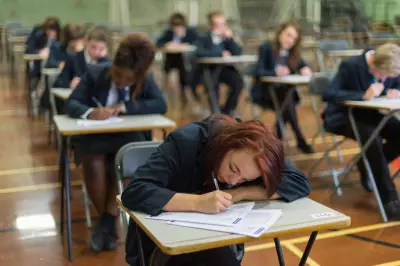

For undergraduate students, a similar dynamic plays out in a different form. When AI systems can supply explanations, drafts, solutions, and study plans on demand, the temptation emerges to offload learning's most challenging aspects. The educational technology industry might characterize this type of work as "inefficient," suggesting students benefit from letting machines handle it. However, cognitive psychology indicates that intellectual growth occurs precisely through the labor of drafting, revising, failing, reattempting, grappling with confusion, and refining weak arguments. That labor is what it means to learn how to learn.

Reconceptualizing the University's Purpose in an Automated World

Collectively, these developments indicate that automation's greatest risk in higher education is not merely that machines replace individual tasks, but that they gradually erode the broader practice ecosystem that has long sustained teaching, research, and learning. This reality presents an uncomfortable inflection point: What purpose do universities serve when knowledge work becomes increasingly automated?

One perspective treats universities primarily as credential and knowledge production engines, focusing on output metrics like graduation rates and research publications. If autonomous systems can deliver these outputs more efficiently, institutions possess compelling reasons for adoption.

An alternative viewpoint recognizes higher education as more than an output machine, acknowledging that its value resides partly within the ecosystem itself. This model assigns intrinsic worth to opportunity pipelines through which novices become experts, mentorship structures cultivating judgment and responsibility, and educational designs encouraging productive struggle rather than optimizing it away. Here, what matters extends beyond whether knowledge and degrees are produced to include how they are generated and what kinds of individuals, capabilities, and communities form during the process.

In a world where knowledge work itself grows increasingly automated, universities must confront what higher education owes its students, its early-career scholars, and the broader society it serves. The answers will determine not only how artificial intelligence is adopted, but ultimately what the modern university becomes.