The AI Anxieties of a Trainee Teacher: Cheating Machine or Powerful Assistant?

At 39, I embarked on training to become a school English teacher, aiming to help young people develop as readers, writers, and thinkers with a deeper connection to literature. After 15 years as a freelance writer and novelist, I felt confident in my abilities. Yet, as my training progressed, one question haunted me: what to do about artificial intelligence? The immediate dilemma was clear: with all pupils having access to free online chatbots that produce fluid, complex prose on demand, what does this mean for English instruction?

This question sits atop a pile of timeless pedagogical quandaries: What are we trying to achieve in school? How should we go about it? How do we measure success? As a newcomer navigating these issues for the first time, I found that throwing AI into the mix felt like downing a coffee in the middle of a panic attack.

The Heated Debate: Rejectionists vs. Cheerleaders

I frantically sought perspectives on AI and the English classroom through pedagogy podcasts, Substacks, and YouTube channels. My algorithmic feeds catered to this interest, serving an endless supply of content—including tech company ads—promising to help me think through these urgent questions. I quickly learned this was a world of heated, often acrimonious, debate.

On one side were the AI rejectionists: teachers and education pundits who view AI as an existential assault by rapacious tech companies on classroom activities. They argue students need to learn how to push through difficulty, read complex texts, and develop arguments, embracing the friction and uncertainty of these processes. Access to a one-click writing machine, they contend, makes it too easy to run away from these challenges.

Rejectionists share horror stories of students handing in AI-generated papers they cannot discuss, citing nonexistent sources "hallucinated" by chatbots. They cite studies suggesting chatbot use dulls reasoning faculties or impedes brain development, and raise ethical concerns about environmental costs, reliance on copyrighted writing, and big tech's oligarchic leanings. Their solution is to build AI-free classrooms, shifting toward in-class essays written by hand and reviving oral tests.

On the other side were the AI cheerleaders: not the tech execs predicting the end of schooling, but teachers and pundits who argue AI carries great pedagogical potential. They see chatbots as powerful assistant teachers, able to engage every student simultaneously, provide personalised feedback, and nudge each one along their own learning path. From this perspective, rejectionists' instinct to shun AI represents a lack of understanding and does a disservice to students who need tech skills for university and careers.

Classroom Observations and Internal Conflicts

As I waded through these arguments, my anxiety grew. Teachers, including myself, fear doing the "wrong" thing—using ineffective strategies or failing students. We believe good teachers can change lives, and bad ones can leave marks, especially in English, where they might contribute to "readicide." Beneath this fear is a more fundamental one: being seen as out-of-touch losers hiding in the classroom.

I resolved not to get suckered by tech hype but also not to refuse potentially useful tools. I needed a provisional ruling: what AI meant for my high-school English classes, not its broader implications for education. Last spring, I observed a veteran English teacher, Emily, at a school in a Chicago suburb. What I saw disposed me to join the rejectionists.

I witnessed disruptive effects: fully AI-generated papers, hallucinated quotes, and tense student-teacher conversations about proof. Emily and I stressed over ambiguous cases, trying to sort student nonsense from AI nonsense. I'd become a teacher to honour young people's writing with close attention, but AI's presence interfered, creating despair as I tried to divine papers' origins.

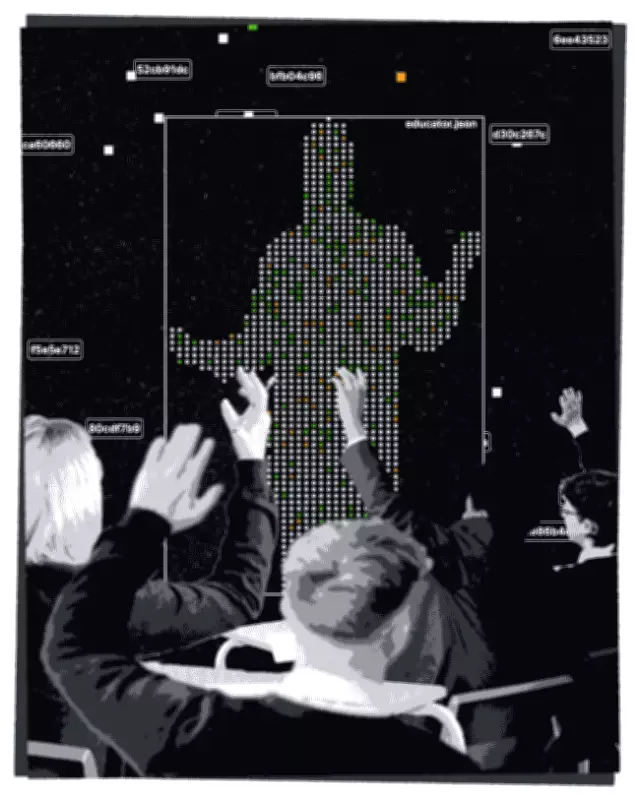

Emily's students had school-issued laptops, and her computer allowed her to surveil their screens in a grid recalling CCTV monitors. Some students didn't use AI in class; others turned to it reflexively. One student habitually put every new subject into ChatGPT for notes. Often, students got funnelled toward AI even when not looking for it, such as clicking "Dive deeper in AI mode" after Googling a topic.

Emily assigned most reading in class, reading much of it aloud, especially early in the year. This was dismaying, contrasting with my romantic visions of leading students into literary complexity. What did it mean that many students seemed unequipped to read independently and turned reflexively to AI? I wondered if unstoppable forces were wiping out what I'd signed up for.

But watching Emily read to the class lifted my spirits. Reading time was sometimes magic. With laptops and phones away, students engaged with All Quiet on the Western Front, transforming it from an imposing monolith into a familiar companion. They gasped at dramatic turns and wondered why characters acted as they did. The room crackled with energy between students, teacher, and words written a century ago.

The AI shenanigans had been depressing; the AI-free teaching inspiring. After leading some readings myself, I felt ready to scream: I'm an AI rejectionist, and proud! Over the summer, doubts crept back. Reading time hadn't answered my AI questions. As a student teacher in the fall, I had to decide what students would write, when, and how.

Testing AI and Seeking Balance

I staged an internal debate. Reading together without devices felt great, but did every student learn as much as possible? What if, after AI-free reading, students had chatbots giving personalised feedback aligned with my goals? As a teacher, I give feedback, but time is limited. Why not let AI help, especially when students are stuck at home?

In the name of due diligence, I tested AI chatbots, including those with "student mode." First, I evaluated their ability to do the Worst Thing: generate writing indistinguishable from student work with instructions like "sound like a 15-year-old" or "insert realistic typos." In 2023, it was reassuring to think machine writing was instantly detectable; now, that's no longer the case.

Next, I tested chatbots on less poisonous uses, like commenting on drafts or answering assignment questions. Some were very good, even impressing me with feedback on my magazine pieces. I could feel the imaginary cheerleaders claiming victory. Yet I kept returning to memories of reading time in Emily's classroom.

Part of what felt special was how it structured attention. With devices away, the possibility of paying attention was always close, without distractions from bright, scrollable screens. It was good to enforce separation between learning and tech temptations. My reflex was to apply this to writing processes.

Can chatbots give useful feedback? Maybe. Can their use be regulated? Probably. Can they be ordered not to offer one-click rewrites? Yes. But every high-school student knows these labour-saving options are a click away on the public internet. I couldn't wipe chatbots from their world, only decide how much to steer students toward them.

Teaching as an "Impossible Profession"

Teaching, according to Freud, is one of the "impossible professions," where total success is never assured. Throughout the fall, I reminded myself of this, trying to feel better about my uncertainty. When I devoted class time to reading, it felt great, but I worried I was doing too much of it. When students wrote essays in class, I felt virtuous for banishing tech's shortcut machine, yet worried I wasn't exposing them to the frustrations and pleasures of drafting over time.

When I set ambitious assignments with unsupervised time, I felt virtuous again, but visions of students pasting instructions into ChatGPT invaded my mind. I tried creating outside-the-box assignments to spark interest, like selecting soundtracks for book-based movies or writing satirical essays. I loved reading these, learning about students' understanding and lives, but couldn't stop worrying.

Maybe chatbots could have helped. For every assignment, I caught a few students using them to cheat; they cited time pressure and misunderstanding. I implored them to ask me, but wondered: if I'd trained a chatbot to answer questions my way, might fewer have cheated? Might their writing have improved? Or would more have traipsed down the path to cheating? The decisions felt impossible.

Direct Conversations and Future Directions

Besides reading, talking directly about AI felt relatively safe from doubts. I explained my thinking (including my uncertainty) and solicited students' thoughts. Questionnaires revealed varied AI use: some never used it, while others used it for flashcards, test reviews, advice, or editing. Almost everyone expressed fear that AI could erode original thought, though some of their examples of "responsible" usage, like having AI generate thesis statements or outlines, seemed to bypass the cultivation of exactly that.

One student admitted using AI to complete assignments he deemed not worth his time, and his father expressed concern about AI fluency for future employment. Contextual knowledge about AI was low; without a screen in front of them, no student could explain how chatbots generate text. I shared an email about a lawsuit against an AI firm for using copyrighted writing, which prompted silence.

So I tried to talk about it, feeling awkward but good as attention shifted into a higher gear. In future, I'll seek more opportunities to bring AI into the classroom as a subject of discussion, while remaining cautious about AI tools. I want students to think critically about all language, including in ads, speeches, and social media. If language machines interface with their world, they should ask questions about the machinery, business models, low-wage workers, and risks like self-harm or environmental impacts.

Finding Peace in Uncertainty

On my last day, I graded my younger students' short stories, which were inspired by unit themes. I had allowed digital submission, but the process also included in-class work and meetings. Only one or two had obviously used chatbots, which did a serviceable job. Overall, I was delighted by the inventiveness and understanding on display, especially by how many students drew, without my prompting, on Mark Twain's The Mysterious Stranger, with Satans who mirrored chatbot behaviour by offering to do homework or polish work.

Reading these stories was a joy, mostly uncomplicated by AI anxieties. The biggest threat was solicitations from AI tools embedded in my software, asking to grade or categorise work. I didn't want that; I wanted to read what students wrote. I'd told them writing is a gift for knowing ourselves across time. What would it mean to hand my response over to an algorithm? I printed the stories and shut my computer.

Did I catch every instance of cheating? Surely not, and some teachers might shake their heads at my naivety. But I knew my students; I'd watched their drafts and made them explain stories to my face. That counted for something. I felt at peace, having done what I thought right for the semester. In future, the approach will change unpredictably—that, too, is the job. I picked up my pen and began to read.