Innocent Man Takes Legal Action After Facial Recognition Software Causes Wrongful Arrest

Alvi Choudhury, a 26-year-old software engineer from Southampton, is seeking damages from two police forces after automated facial recognition technology wrongly identified him as a suspect in a burglary committed more than 100 miles away. The false match led to his arrest at his family home and a distressing 10-hour detention in police custody, after which he was released without charge in the early hours of the morning.

Technology Failure and Physical Discrepancies

Thames Valley Police used facial recognition software that allegedly matched Mr. Choudhury to a suspect in a £3,000 burglary in Milton Keynes. Mr. Choudhury strongly disputes the identification, pointing to significant physical differences between himself and the person captured on CCTV footage from the crime scene.

He told reporters: 'I was very angry because the person in the video looked about ten years younger than me. Everything was different - his skin tone was lighter, he appeared to be around 18 years old, his nose was larger, he had no facial hair, his eyes were dissimilar, and his lips were smaller than mine.' The only apparent similarity was their curly hair.

Arrest Aftermath and Legal Proceedings

Mr. Choudhury was arrested in January by Hampshire Constabulary officers at his Southampton home. The arrest was witnessed by neighbours and caused considerable distress to his father. The ordeal left him unable to work the following day, and he has since brought a legal claim against both Thames Valley Police and Hampshire Constabulary.

While in custody, Mr. Choudhury produced evidence that he had been in work meetings in Southampton, roughly 115 miles from Milton Keynes, on the day of the burglary. He says that when he asked officers at a Hampshire police station whether they thought he resembled the suspect in the footage, they laughed.

Systemic Issues and Algorithmic Bias

The facial recognition system used by UK police forces runs a German algorithm procured by the Home Office and searches approximately 19 million mugshots held on the national police database. Police carry out around 25,000 searches a month with the system, although the National Police Chiefs' Council stresses that matches should be treated as intelligence rather than as definitive identifications.

Thames Valley Police maintained that the decision to arrest Mr. Choudhury followed both technological matching and human visual assessment. However, the force acknowledged that the error 'may have been the result of bias within facial recognition technology.'

Home Office research published in December revealed stark disparities in false positive rates across ethnic groups. The technology produced false positives for black faces 5.5% of the time and for Asian faces 4% of the time, compared with just 0.04% for white faces. That makes a false match roughly 137 times more likely for black faces and 100 times more likely for Asian faces than for white faces.

Previous Database Entry and Future Concerns

Mr. Choudhury's image was already on the police database because of a previous wrongful arrest in 2021, made after he was attacked during a night out in Portsmouth. He now worries that a second mugshot on the system, taken after this latest arrest, could make him more likely to be wrongly identified in future.

The concern also has professional implications: as a software engineer, Mr. Choudhury sometimes needs security clearance to work for government clients. He fears the incident makes him look 'dodgier' and could harm his career prospects.

Broader Context of Facial Recognition Controversies

Police and crime commissioners have previously warned of 'concerning in-built bias' in facial recognition systems, noting that although there is no evidence of adverse impact in individual cases, 'that is more by luck than design.'

Last month, South Wales Police paid compensation to a black man who had been identified as a potential match for a stalking suspect, even though he ranked only 32nd on the system's list of suggested matches.

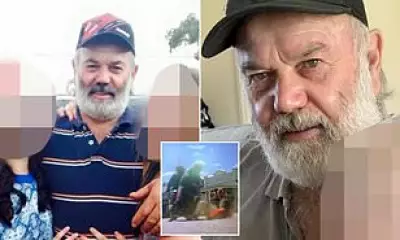

In a separate retail incident, 67-year-old Ian Clayton was asked to leave a Home Bargains store in Chester after facial recognition technology falsely identified him as having stolen items. Security company Facewatch, which operates the system, admitted Mr. Clayton should not have appeared on their watchlist and permanently removed his image from their database.

These cases highlight growing concerns about the reliability and potential biases of facial recognition technology in both law enforcement and commercial applications. Mr. Choudhury's legal action represents a significant challenge to police use of this increasingly controversial technology.

Hampshire Constabulary declined to comment on the ongoing case. Thames Valley Police has been approached for further comment on its facial recognition protocols and the steps it takes to prevent errors.