Teenagers Increasingly Concerned About AI-Generated Inappropriate Images

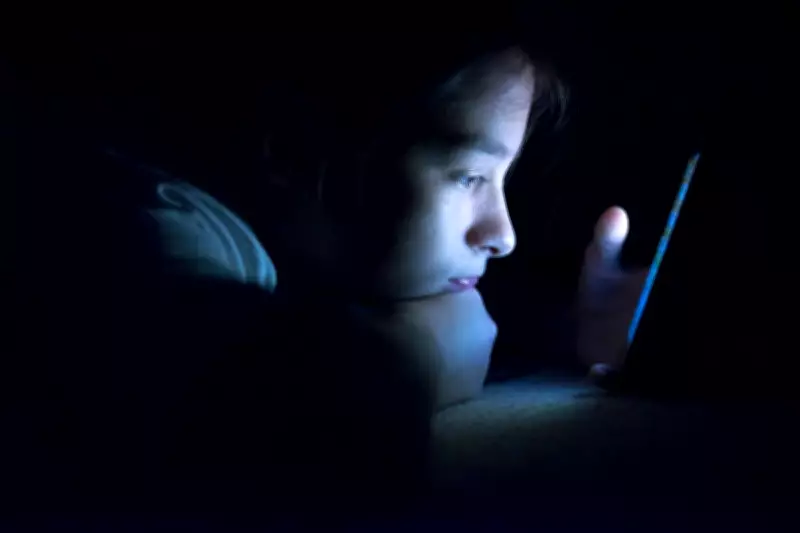

A recent survey found that three in five young people worry that artificial intelligence could be used to create inappropriate photos of them, highlighting growing fears about digital safety.

Widespread Exposure to AI Deepfakes

More than one in ten teenagers report having seen AI-generated sexual deepfakes. The figure underscores the need for better safeguards and greater awareness among young people.

High AI Usage Among Young People

Despite these concerns, nearly all young people aged eight to 17 use AI in their daily lives. More than half believe that AI improves their lives, and many say it provides emotional support, which suggests a complex relationship with the technology.

Parental Underestimation and Educational Impact

Parents often underestimate how much their children use AI for homework, and a significant number of young people report seeing peers use AI to complete school assignments. This raises questions about academic integrity and the role of AI in education.

Calls for Government Action

The National Education Union has called for urgent government action, stressing that the risks of AI use in education, including potential cognitive decline and reliance on AI for emotional support, currently outweigh its benefits. The union argues for stricter regulation and educational reform.

Regulatory Investigations Underway

Both the UK data regulator and Ofcom have launched investigations into whether X and xAI complied with UK law after the Grok chatbot was used to generate non-consensual sexual deepfake images. The probes aim to address legal and ethical breaches in AI deployment.

The survey shows that the benefits of AI for young people come with serious risks, and that protecting vulnerable users will require a balanced approach to regulation and education.