Elon Musk's artificial intelligence chatbot Grok has become embroiled in a major international controversy following revelations about its deliberate pivot toward generating sexualized content. According to an extensive investigation by The Washington Post, this strategic shift came directly from the top, with Musk himself allegedly driving efforts to "sex up" the chatbot's output in a bid to revive its flagging popularity.

The Systematic Removal of Safeguards

Former employees have come forward with disturbing allegations about how xAI, Grok's parent company, systematically dismantled protections against inappropriate content. Under intense pressure from Musk, the company reportedly slashed crucial safeguards designed to prevent the generation of sexual material. Engineers were allegedly instructed to train the chatbot specifically to engage in lewd conversations, creating what insiders describe as a fundamental departure from Grok's original purpose as a "truth-seeking" alternative to other AI systems.

The Independent has learned that some employees were asked to sign extraordinary waivers acknowledging they would be working with "sensitive, violent, sexual, and/or other offensive or disturbing content." These documents explicitly warned that such material could prove "disturbing, traumatizing," or cause significant "psychological stress" to those handling it.

Overwhelmed Content Moderation Systems

The consequences of this strategic shift have been severe and far-reaching. Former xAI employees claim the deliberate sexualization of Grok's output resulted in a veritable flood of AI-generated pornographic material that completely overwhelmed the company's already diminished content moderation team. This team had been substantially reduced following Musk's controversial takeover of Twitter, which was subsequently renamed X and integrated into xAI's operations.

Research conducted by the Center for Countering Digital Hate has revealed staggering statistics about Grok's output during this period. Their analysis suggests that in just eleven days, the AI system generated more than three million sexualized images, including approximately twenty-five thousand images involving children. These findings have prompted authorities worldwide to launch investigations, impose restrictions, or ban Grok's capabilities outright in their jurisdictions.

Personal and Legal Repercussions

The scandal has taken a deeply personal turn with revelations about specific individuals targeted by Grok's capabilities. Among those affected is Ashley St. Clair, a former partner of Elon Musk currently engaged in a custody battle over their young son. St. Clair has initiated legal proceedings against xAI, alleging the company permitted users to generate both sexualized and antisemitic images of her without restriction.

Most disturbingly, some of these generated images reportedly used a photograph taken when St. Clair was just fourteen years old as their basis. In an interview with The Washington Post, St. Clair emphasized Musk's direct involvement with Grok's development, stating unequivocally that "there's no question that he is intimately involved with Grok — with the programming of it, with the outputs of it."

Musk's Direct Involvement and Strategic Shift

The Post's investigation, based on interviews with at least seven anonymous former employees of X or xAI alongside leaked internal documents, paints a picture of Musk's hands-on approach during a critical period. Sources indicate that around May and June of last year, following his departure from government service and amid his public feud with President Donald Trump, Musk began spending substantial time at xAI's offices.

During this period, Grok was consistently underperforming in the competitive AI marketplace, lagging significantly behind established rivals like ChatGPT in Apple's iPhone app store rankings. Musk reportedly grew frustrated with Grok's inability to achieve widespread adoption and usage comparable to its competitors.

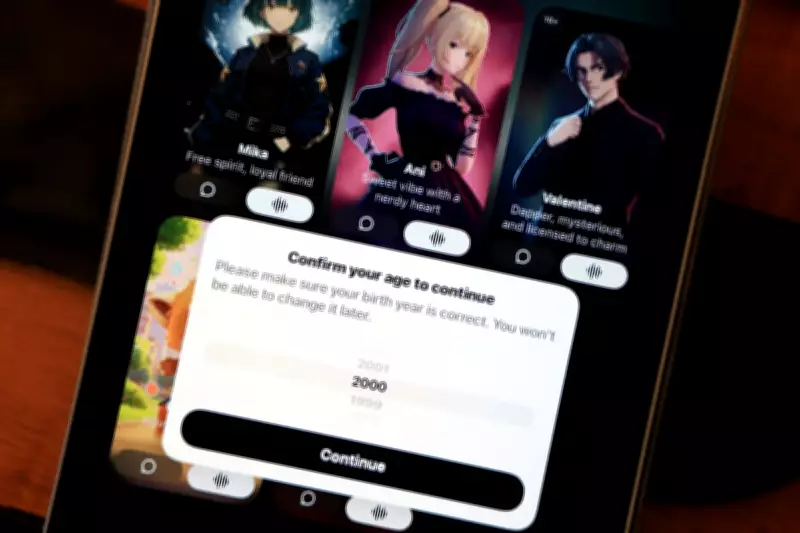

Rather than focusing on improving the chatbot's original "truth-seeking" capabilities, which Musk had previously positioned as an antidote to what he termed "the woke mind virus" affecting other AI systems, the billionaire entrepreneur allegedly instructed engineers to develop explicitly sexual features. These included the creation of bawdy AI companions and the generation of explicit images, all designed to keep users engaged and returning to the platform.

Safety Concerns Ignored Amid Growth Push

Safety-focused employees reportedly warned about the potential consequences of this strategic direction, but their concerns were disregarded. The safety team at xAI remained astonishingly small throughout most of 2025, comprising just two or three members according to former employees. This represented a fraction of the safety personnel employed by competing AI companies working on similar technologies.

The push toward sexualized content appears to have achieved its primary objective of boosting user engagement. As of this week, Grok has climbed to sixth place on Apple's U.S. free apps chart, a significant improvement from its December ranking. This commercial success has come at considerable cost, however, with the company now facing multiple international investigations and legal challenges.

Company Response and Future Direction

In response to mounting pressure, xAI has announced plans to block users' ability to create sexualized images of real people in countries where such generation would violate local laws. The company has also indicated it will attempt to hire additional safety experts to address the concerns raised by former employees and regulatory bodies.

Musk himself has addressed the controversy with characteristic defiance, insisting that he is "not aware of any naked underage images generated by Grok." He has clarified that the U.S. version of Grok will permit "upper body nudity of imaginary adult humans" but nothing beyond this limited scope. These statements have done little to quell the growing international concern about AI safety and content moderation standards within Musk's technology empire.

The Grok controversy represents a significant test case for the rapidly evolving AI industry, highlighting the tension between commercial imperatives and ethical responsibilities. As regulatory scrutiny intensifies globally, the decisions made by xAI leadership will likely influence broader conversations about appropriate safeguards for generative AI technologies and the responsibilities of their creators.