Entertainment

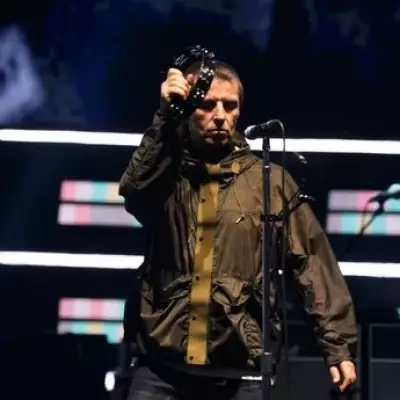

Liam Gallagher Calls Oasis Reunion Film a 'Romantic Comedy'

Liam Gallagher describes the new Oasis documentary as a 'romantic comedy with a bit of rock n roll,' featuring unseen footage and the brothers' first joint interview in 25 years.

Politics

Starmer Vows Social Media Crackdown After Teen Blackmail Horror

PM Keir Starmer pledges action on under-16 social media restrictions as victims share harrowing stories of blackmail and suicide linked to online harm.

Sports

Timber and Merino Out for Arsenal vs West Ham Title Clash

Arsenal face West Ham in a crucial Premier League match with Jurrien Timber and Mikel Merino ruled out. Mikel Arteta confirms both will miss the London Stadium showdown.

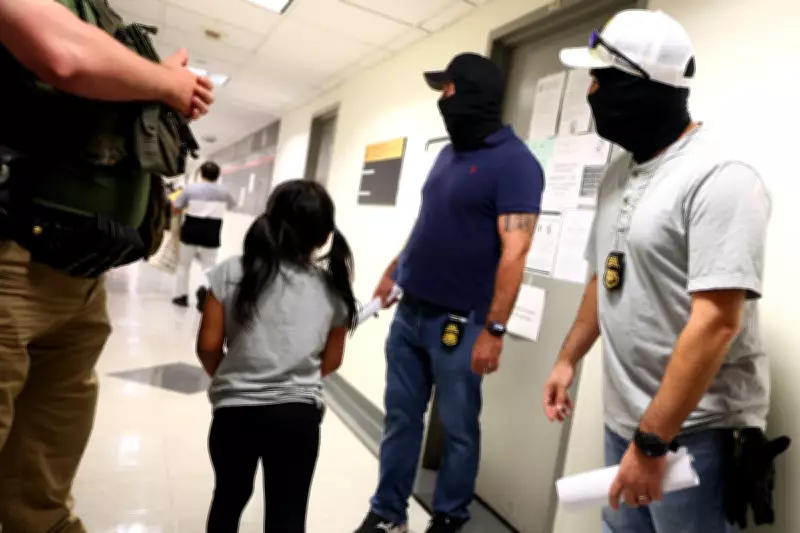

Crime

Man Arrested for Murder in Bristol Roof Garden Death of Anthony Clemmings

A 50-year-old man has been arrested on suspicion of murder after Anthony Clemmings, 54, was found dead in a Bristol roof garden. Police continue investigations.

Weather

A Terrible Time for a Tractor Breakdown in Lincolnshire

A farmer in Brigg, Lincolnshire, suffers a tractor breakdown at the worst possible time, disrupting bird food sowing and a school visit, but the day ends with a positive outcome.

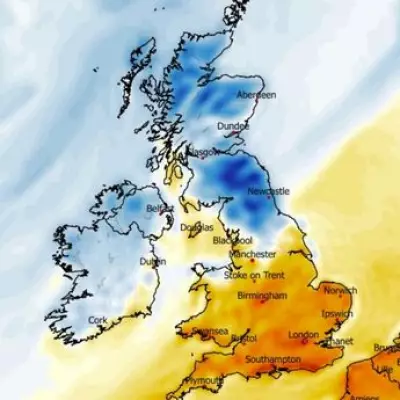

Met Office: 22C Saturday, 40 counties see above-average heat

The Met Office predicts temperatures up to 22C on Saturday, with 40 counties in England and Wales experiencing above-average heat. A north-south divide is expected.

Freak Storms and Waterspouts Batter Southern Spain

Flash floods and waterspouts have hit southern Spain as severe weather batters holiday hotspots. Residents were stunned by marine tornadoes off La Manga.

Michigan Home Crushed by Tree Day After Sale

A house in St Clair Shores, Michigan, was severely damaged by a falling tree during a storm, just one day after being sold. The seller is expected to cover repairs.

Mount Dukono Eruption Kills Three, 20 Missing

Indonesia's Mount Dukono volcano has erupted, spewing ash 10km high, killing three hikers and leaving 20 missing. Search efforts continue.

Environment

Tech

UK Geography Weekend Special

Weekend special: 100 questions about UK geography. Perfect for students preparing for exams or anyone who loves learning about Britain's diverse landscapes.