California Defies Trump Administration with New AI Regulations

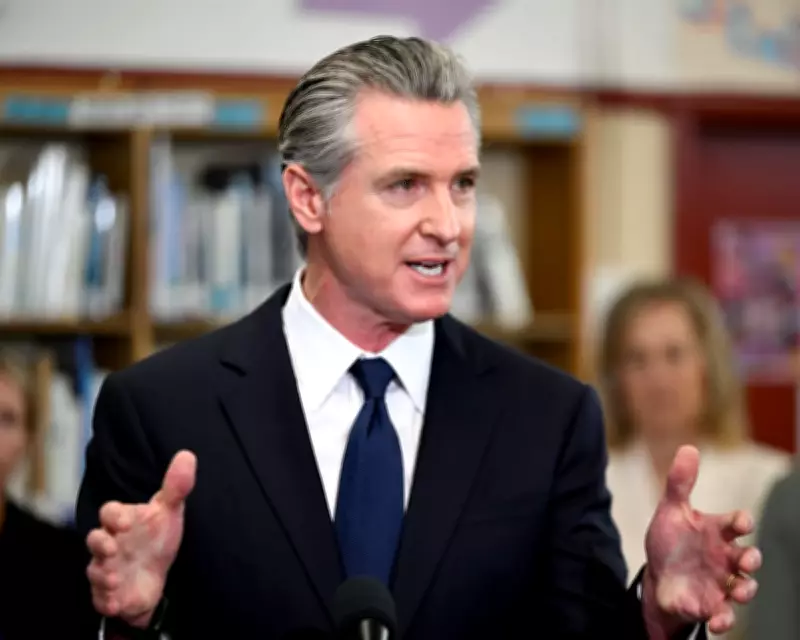

California Governor Gavin Newsom has signed an executive order establishing new artificial intelligence regulations for companies seeking state contracts, directly challenging President Donald Trump's calls for minimal oversight of the industry. The order, signed on Monday, gives state agencies four months to develop comprehensive AI policies focused on protecting public safety and individual rights.

New Requirements for AI Companies

Under the new framework, artificial intelligence firms hoping to secure contracts with California must demonstrate specific safeguards. These include policies preventing AI systems from distributing child sexual abuse material and violent pornography. Companies must also show how their models avoid incorporating "harmful bias" and detail procedures to prevent "unlawful discrimination, detention, and surveillance."

The executive order further directs state officials to establish best practices for watermarking AI-generated or manipulated images and videos, addressing growing concerns about deepfakes and digital misinformation.

Newsom's Vision for Responsible Innovation

"California has always been the birthplace of innovation," Newsom stated in his announcement. "But we also understand the flip side: in the wrong hands, innovation can be misused in ways that put people at risk. California leads in AI, and we're going to use every tool we have to ensure companies protect people's rights, not exploit them or put them in harm's way."

The governor's action marks a significant escalation in the ongoing debate over how to regulate artificial intelligence, a technology that has raised numerous public safety concerns while promising economic transformation.

State-Level Regulation vs. Federal Opposition

California's move is part of a broader trend of state-level attempts to regulate the rapidly evolving AI industry. According to recent reports, states have passed more than 100 laws addressing various AI concerns, including measures to shield children from potentially harmful chatbots and prevent AI companies from using copyright-protected material without permission.

These state efforts directly contradict the Trump administration's national AI policy framework issued in December, which explicitly discouraged states from implementing their own regulations. "To win, United States AI companies must be free to innovate without cumbersome regulation," Trump's executive order stated. "But excessive state regulation thwarts this imperative."

The president's framework directed the Department of Justice to establish an "AI Litigation Task Force" charged with challenging state AI regulations, setting the stage for potential legal conflicts between federal and state authorities.

Broader Implications for AI Governance

This regulatory clash highlights fundamental disagreements about how to balance innovation with safety in the artificial intelligence sector. While the Trump administration argues that excessive regulation could stifle American technological competitiveness, California officials contend that basic safeguards are necessary to prevent harm and protect vulnerable populations.

The debate extends beyond technical specifications to broader societal concerns: that AI might devalue labor, eliminate jobs, and infringe upon civil liberties through surveillance and discrimination. California's approach suggests a growing recognition among state leaders that artificial intelligence requires thoughtful governance rather than purely market-driven development.

As the four-month policy development period begins, technology companies, civil rights advocates, and other stakeholders will be closely watching how California implements these new requirements and whether other states follow suit with similar regulatory frameworks.