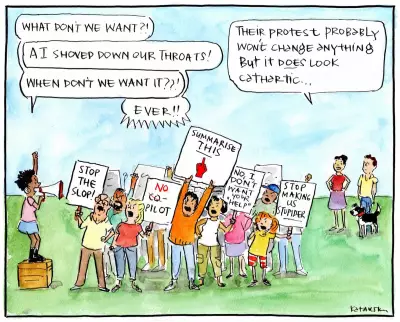

The Rise of AI-Generated Fruit Dramas on TikTok

If you have spent any significant time on TikTok recently, you may have encountered a peculiar and unsettling trend dominating social media feeds: AI-generated fruit dramas. These short videos feature anthropomorphic fruit characters engaging in a wide array of ethically problematic behaviours, from affairs and racist attitudes to the sexual assault of female characters. At first glance, these clips appear so bizarre and grotesque that they seem almost impossible to take seriously. However, their popularity tells a different story, with some accounts amassing hundreds of millions of views and millions of followers.

Why Are These Videos So Compelling?

One prominent account, known as ai.cinema021, has launched a parody series titled Fruit Love Island, attracting over three million followers. This content represents, at best, a wasteful affront to the art of animation and, at worst, a normalisation of racism and misogyny. The critical question remains: why do these videos have such a vast and dedicated fanbase? The answer lies in the sophisticated exploitation of core human psychological principles, combined with the addictive design features of platforms like TikTok.

Tapping into the Brain's Reward System

Short-form video feeds, including TikTok and Instagram Reels, operate on principles strikingly similar to those used in gambling systems. The human brain is exceptionally sensitive to novelty and unpredictability, both of which are closely linked to dopamine signalling in reward learning. When rewards are delivered unpredictably, behaviour becomes more persistent, a pattern known as variable reinforcement. This mechanism sustains repeated actions even when rewards are inconsistent, making it incredibly effective at keeping users engaged.

AI-generated slop videos offer rapid visual novelty and unexpected emotional turns, leaving viewers uncertain whether the next clip will be absurd, funny, tragic, or strangely compelling. Furthermore, these videos compress significant emotional experiences into mere seconds, moving swiftly from betrayal to sadness, revenge, and humour. This creates emotional volatility, which heightens arousal and sustains attention. Research consistently shows that emotionally charged content, particularly when it is negative or surprising, is far more likely to capture our attention than neutral material.

The Pull of the 'Kinda Wrong' Feeling

Many viewers report a sense that these fruit dramas feel off or unsettling. The characters are expressive yet often lack full coherence, and the narratives resemble human drama but are devoid of internal logic. This phenomenon relates to the concept of the uncanny valley, where near-human representations generate discomfort. Importantly, these videos rarely become disturbing enough to trigger avoidance; instead, they occupy a middle ground: strange enough to provoke curiosity but not uncomfortable enough to make viewers stop watching.

This creates cognitive tension, which, according to cognitive dissonance theory, motivates individuals to resolve inconsistencies. In this context, the way to alleviate tension is to continue watching in search of closure, with the mind persistently questioning: 'What is this, and where is it going?' Additionally, the highly synthetic nature of the characters allows viewers to relax ethical judgement, as the scenarios feel fictional even when they mirror real social behaviours. Studies on moral disengagement indicate that people are more likely to overlook unethical messaging when harm appears abstract or indirect, enabling consumption without the discomfort that would arise if real people were involved.

Influence Through Minor Interactions and Algorithms

Much like AI slop, social media algorithms do not prioritise meaning or quality; they prioritise content that captures attention. Recommendation systems are driven by metrics such as watch time, completion rate, and interaction. High engagement leads to greater visibility, which in turn encourages the production of more similar content, creating a powerful feedback loop. From an AI governance perspective, these videos highlight an often-overlooked risk: generative systems do not merely produce content; they can gradually shape our behaviours, often without our conscious awareness. This aligns with broader concerns in AI ethics regarding behavioural influence and manipulative design on a large scale.
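The feedback loop described above can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not how TikTok or any real recommendation system works: the scoring weights, the two content types, and their metric values are all hypothetical, and the update rule (each round, a content type's share of the feed grows in proportion to its engagement score) is a deliberate simplification.

```python
def engagement_score(watch_time, completion_rate, interaction_rate):
    """Combine three metrics (each scaled 0 to 1) into one score.

    The weights are illustrative assumptions, not values from any platform.
    """
    return 0.5 * watch_time + 0.3 * completion_rate + 0.2 * interaction_rate


def simulate_feed(scores, rounds):
    """Return each content type's share of the feed after `rounds` updates.

    Every type starts with an equal share. In each round, a type's share is
    multiplied by its engagement score and the shares are renormalised, so
    more engaging content is shown more, which compounds round after round.
    """
    shares = {name: 1.0 / len(scores) for name in scores}
    for _ in range(rounds):
        weighted = {name: shares[name] * scores[name] for name in scores}
        total = sum(weighted.values())
        shares = {name: value / total for name, value in weighted.items()}
    return shares


# Hypothetical metric values for two made-up content types.
scores = {
    "fruit_drama": engagement_score(0.9, 0.8, 0.6),
    "slow_explainer": engagement_score(0.5, 0.4, 0.3),
}

shares = simulate_feed(scores, rounds=10)
for name, share in shares.items():
    print(f"{name}: {share:.1%} of the feed")
```

Even in this simplified model, the more engaging content type crowds out the other almost entirely within ten rounds, although neither "meaning" nor "quality" appears anywhere in the scoring.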

Reclaiming Your Time and Attention

Avoiding social media entirely is unrealistic for many individuals, but small, intentional changes can reduce the pull of AI-generated brain rot. One effective approach is to introduce a brief pause before scrolling to the next video. Even a momentary interruption can weaken the reward loop in your brain, making it easier to put your phone down. When you notice yourself thinking, 'This feels pointless' or 'This is strange', that is the optimal time to stop. In some cases, a digital detox might be beneficial.

You can also retrain your algorithm by quickly skipping videos you do not wish to see or selecting 'Not interested' on them, and replace passive scrolling with intentional viewing by seeking out specific content. Finally, create friction by disabling automatic playback, limiting access to feeds through app notification settings, or removing the app from your home screen. AI fruit videos may seem trivial and absurd, but they reveal something crucial about our digital environment. As generative systems scale up, they will only become more adept at capturing and directing our attention. Understanding the psychology behind this phenomenon is the first essential step toward resisting it.