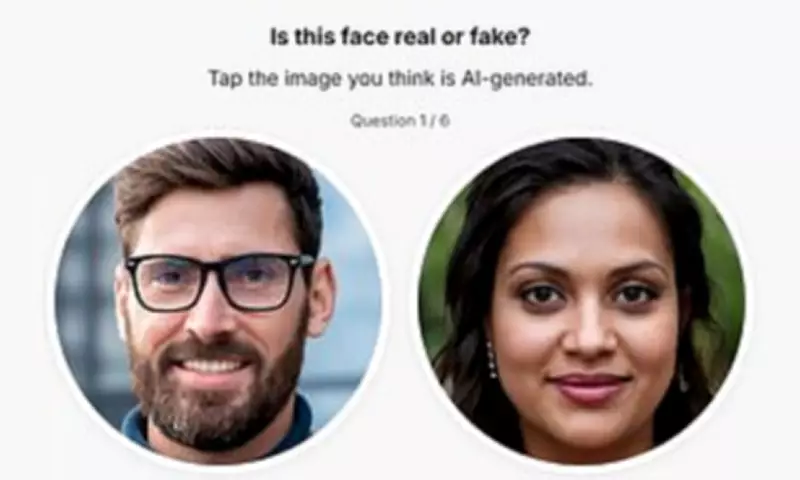

If you believe you can reliably distinguish between a real human face and one created by artificial intelligence, you are likely mistaken, according to a comprehensive new study. Researchers from the University of New South Wales in Australia have found that individuals consistently overestimate their ability to detect digitally fabricated faces, a dangerous misconception in an era where AI-generated imagery is becoming nearly flawless.

The Illusion of Discernment

The study, in which 125 participants completed an online facial recognition test, found that ordinary people performed only marginally better than chance at identifying AI-generated faces. More strikingly, 'super recognisers' (people with exceptional face-recognition abilities) outperformed others by only a slim margin. This suggests that expertise in human face recognition does not necessarily carry over to detecting synthetic faces.

From Obvious Glitches to Perfected Imperfections

Dr. Amy Dawel, one of the study's authors, explained the fundamental shift in how AI-generated faces now deceive observers. 'Ironically, the most advanced AI faces aren't given away by what's wrong with them, but by what's too right,' she noted. 'Rather than obvious glitches, they tend to be unusually average—highly symmetrical, well-proportioned and statistically typical.'

This marks a significant departure from earlier AI-generated faces, whose telltale signs included distorted teeth, glasses merging unnaturally with skin, and improperly attached ears. Modern generators have largely eliminated these obvious errors, producing faces that look 'too good to be true' rather than visibly flawed.

The Confidence Gap

Co-author Dr. James Dunn highlighted the concerning disconnect between perception and reality. 'What was consistent was people's confidence in their ability to spot an AI-generated face, even when that confidence wasn't matched by their actual performance,' he stated. Compounding the problem, facial qualities traditionally associated with attractiveness, such as symmetry and average proportions, may now be signs of artificiality.

Practical Implications for Digital Security

The researchers emphasized that this misplaced confidence has serious real-world consequences. Visual judgment alone can no longer be trusted in settings ranging from social media and online dating to professional networking and recruitment, where people often assume they can intuitively spot a fake profile picture.

This vulnerability extends to organizations as well, potentially exposing them to sophisticated scams, fabricated identities, and fraudulent profiles. 'There needs to be a healthy level of scepticism,' Dr. Dunn advised. 'For a long time, we've been able to look at a photograph and assume we're seeing a real person. That assumption is now being challenged.'

Beyond Traditional Detection Methods

The study suggests that traditional approaches to identifying synthetic faces, such as looking for blurry backgrounds or distorted features, are becoming obsolete. Rather than teaching people specific tricks for spotting AI faces, the researchers advocate updating our basic assumptions about digital imagery.

'As face-generation technology continues to improve, the gap between what looks plausible and what is real may widen—and recognising the limits of our own judgement will become increasingly important,' Dr. Dawel explained.

A New Category of Expertise

The research also uncovered evidence of what might be called 'super-AI-face-detectors': individuals with an exceptional ability to identify synthetic faces, whether or not they qualified as super recognisers for human faces. Some participants without super-recogniser status actually outperformed those with it. This suggests that detecting AI faces is a distinct skill rather than an extension of ordinary face-recognition ability.

'Our research has revealed that some people are already sleuths at spotting AI-faces,' Dr. Dunn added. 'We want to learn more about how these people are able to spot these fake faces, what clues they are using, and see if these strategies can be taught to the rest of us.'

The Future of Digital Authentication

The findings, published in the British Journal of Psychology, highlight the urgent need for new approaches to digital authentication and verification. As AI technology advances, the visual rules that have guided our assessment of photographic authenticity for decades are becoming increasingly unreliable.

This research is a clear warning about the limits of human perception in an age of sophisticated digital fabrication. The very facial features we perceive as 'normal' or 'attractive' may be the ones that signal artificial creation.