Social Media Giants Confront Legal Accountability for Youth Harm

After years of evasion, social networks like Facebook and Instagram are finally being compelled to acknowledge their detrimental impact on children's mental wellbeing. A pivotal jury decision this week in New Mexico determined that Meta's platforms actively contribute to psychological harm among young users, marking a significant shift towards corporate responsibility.

Landmark Verdicts Signal Changing Legal Landscape

The New Mexico ruling represents merely the initial volley in what experts predict will become a cascade of legal challenges demanding accountability from technology behemoths. Simultaneously, a Los Angeles jury delivered an unprecedented victory for a young woman identified as Kaley, who successfully sued both Meta and Google over childhood social media addiction.

Jurors concluded that these companies intentionally engineered addictive platforms that damaged the mental health of the plaintiff, who is now 20 years old. This dual legal development suggests a fundamental transformation in how courts perceive social media corporations' obligations toward their most vulnerable users.

From Digital Town Squares to Designed Environments

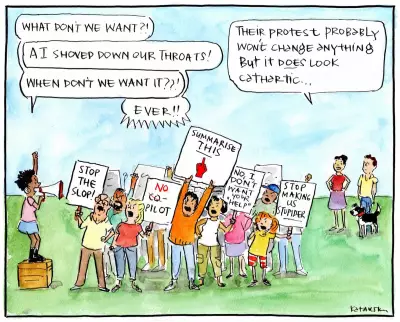

For roughly two decades, Meta and its competitors portrayed their platforms as neutral digital town squares where they merely provided space without responsibility for user behavior. This analogy has crumbled under scrutiny: as any competent architect understands, designed environments inevitably shape human conduct.

Social media companies have essentially constructed vast, unpredictable digital voids without adequate safeguards, then feigned surprise when users, particularly children, suffered psychological consequences from falling into these engineered abysses.

The Contradiction of Content Moderation

To their partial credit, social networks have implemented some content moderation systems under pressure from activists and internal advocates. Meta's platforms occasionally remove extremely harmful material, yet these efforts remain inconsistent and inadequate.

The core flaw persists: these companies treat protective measures as exceptions rather than foundational design principles. Two decades of algorithmic experimentation, growth hacking strategies, and deliberately addictive application designs have demonstrated that the core architecture of these platforms requires careful constraint, not merely superficial content filtering.

Trillions Earned, Responsibility Evaded

Meta and similar corporations have amassed astronomical wealth, on the order of trillions of dollars, harvested primarily from user engagement and personal data, with young people's attention serving as a particularly valuable currency. An entire generation has effectively been raised within these digital ecosystems, making the demand for reciprocal responsibility not merely reasonable but ethically imperative.

Meta has announced plans to appeal the recent verdicts, drawing on the vast resources accumulated from the very attention economy now under scrutiny, but the legal and cultural momentum appears irreversible. These cases coincide with growing public awareness of how social media erodes attention spans and ethical norms.

The Path Forward Requires Sustained Pressure

Meaningful change will require continuous public advocacy alongside corporate introspection. As society grows more deliberate about how it engages with technology, the pressure mounts for platforms to redesign their fundamental architectures rather than apply band-aid solutions to systemic problems.

The question remains whether social networks will genuinely accept responsibility or continue resisting through legal maneuvers and public relations campaigns. What's undeniable is that the era of unquestioned digital exploitation is concluding, replaced by demands for ethical design and corporate accountability that should have emerged years earlier.