A Democratic senator has introduced a bill that would strictly limit the Pentagon's use of artificial intelligence, particularly in surveillance operations and lethal autonomous weapons systems. The AI Guardrails Act, formally presented on Tuesday, comes amid growing military reliance on AI technologies during the ongoing conflict with Iran and contentious ethical disputes between the Department of Defense and leading AI research laboratories.

Legislative Response to Rapid AI Integration

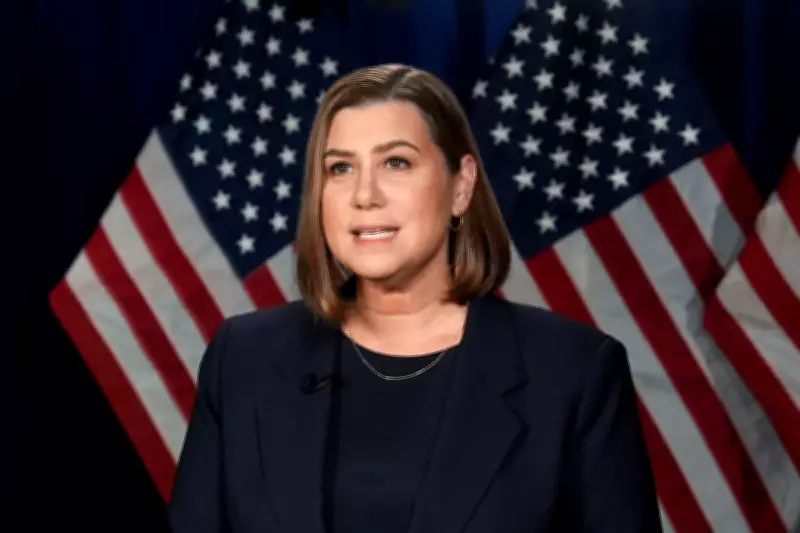

Senator Elissa Slotkin of Michigan, a former CIA analyst with a history of challenging executive authority, is spearheading the proposed legislation. The bill would explicitly prohibit the military from using AI to conduct mass surveillance of American citizens or to authorise lethal strikes without direct human oversight. It would also extend these restrictions to decisions on launching nuclear weapons, mandating that such actions remain under human control at all times.

"If our political landscape were more stable and functional, we would be establishing comprehensive boundaries around AI applications without delay," Senator Slotkin remarked during a recent Armed Services Committee hearing. "Currently, there exists a profound absence of regulatory frameworks and legal guidelines governing these technologies. This legislative gap is not the fault of military personnel; it is a bipartisan failure that we must address urgently."

Pentagon's Stance and Ethical Clashes

The Department of Defense has responded by asserting that its current practices already align with the principles outlined in the AI Guardrails Act. Defense Department official Sean Parnell emphasised in a public statement last month, "The Department of War has no intention of leveraging AI for illegal mass surveillance of Americans, nor do we seek to develop autonomous weapon systems that operate independently of human judgement."

However, this assurance sits uneasily with the recent breakdown in negotiations between the Pentagon and AI firm Anthropic. The dispute centred on ethical provisions governing the integration of AI into defence systems, with Anthropic demanding guarantees that its technology would not be used for mass surveillance or fully autonomous weapons. The Pentagon maintained that it would deploy Anthropic's models only for lawful purposes, but the firm ultimately withdrew, citing ethical reservations.

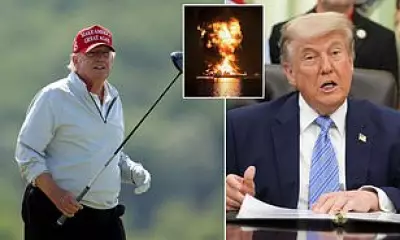

Anthropic's CEO, Dario Amodei, declared last month that the company "cannot in good conscience" accept the terms proposed by the Defense Department. In retaliation, Defense Secretary Pete Hegseth labelled Anthropic a supply chain risk, prompting the Trump administration to order federal agencies to cease using its technology within six months. Anthropic has since initiated legal proceedings to contest this designation, arguing that the administration's actions are ideologically motivated.

AI's Pivotal Role in Modern Warfare

The urgency of Senator Slotkin's bill is underscored by the extensive integration of AI into U.S. military operations, particularly in the Iran campaign. A key technological asset has been Palantir's Maven system, which works with Anthropic's Claude large language model to analyse intelligence, mapping data, and other information streams. This integration gives commanders real-time battlefield insights and helps them identify potential targets far more quickly than before.

"These advanced systems enable us to process enormous datasets in mere seconds, allowing leadership to distill essential information and make informed decisions more rapidly than adversaries can respond," explained Central Command's Admiral Brad Cooper last week. "While human operators retain ultimate authority over targeting decisions, AI tools have revolutionised processes that previously required hours or even days, compressing them into seconds."

Other prominent technology companies, including OpenAI, Google, Elon Musk's xAI, and Anduril, are either already supplying the U.S. military with defence-oriented AI systems or have agreements to do so. This widespread adoption has intensified scrutiny, especially following a probable U.S. strike that destroyed a girls' primary school in Minab, Iran, killing at least 175 people, many of them children.

The AI Guardrails Act is an attempt to set ethical and operational boundaries at a time when technological advancement is outpacing regulatory oversight. As debates over autonomous weapons and surveillance capabilities continue, the legislation highlights the growing pressure to balance military effectiveness with ethical considerations and legal accountability.